The AI "Writing Help" Trap: A Game to Show How AI Can Silently Alter Legal Documents

I made a game to help attorneys understand the situation counsel faced in Kosel Equity v. MacGregor, a recent Connecticut case. Counsel used other tools for research,[^1] then used generative AI for writing assistance, and did not notice when “AI…intuitively [made] changes to the brief.” The game is an interactive demonstration of the unexpected risks of asking generative AI to “clean up” a legal draft, and of how difficult those changes can be to spot.

I actually used generative AI to help me write the code for this game, which exposes me to some of the same risks, since the game’s text is mixed in with the code. So I reviewed the output and manually edited the resulting files to remove content wherever the coding agent had gone beyond what I told it to say. This was frustrating at times, but it was a helpful meta-lesson from the project. If you spot errors in the game, please feel free to email me.

Game Scenario: Make the Draft Motion “Better”?

I frequently warn, including in my Ethics CLE, that LLMs can introduce significant errors even though the “cleaned up” draft might look better at first glance.

The game scenario is simple and realistic: an attorney has a motion for summary judgment with some formatting issues, extra spacing, and a few typos. In one version, the attorney pastes the draft into an AI assistant, asks it to “clean this up,” and pastes the result back into a word processor. In another, the attorney uses an LLM built into the editing tool, such as Copilot in Microsoft Word, the AI features in Apple Pages, or Grammarly, to change the draft in place. In a third, a paralegal, intern, or someone else the attorney supervises does this without the attorney’s knowledge.

As you will see in the game, the AI does clean up the draft. But it also silently makes material changes that are not correct.

How to Play (Scroll Down for Game)

- First, you’ll see the “before” draft motion.

- Second, you’ll “fix” it with AI and see the “after” version of the draft.

- Third, you’ll try to click the spots in the motion where the AI made changes it shouldn’t have.

- Finally, you’ll see what went wrong and get some suggestions for a different approach.

Real-World Parallel: Kosel Equity v. MacGregor (Connecticut Supreme Court, 2026)

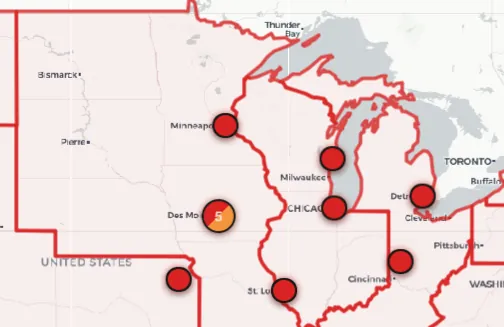

The scenario portrayed in this game is not hypothetical. I discussed it in my Ethics CLE, “AI Gone Wrong in the Midwest,” with content from December 2025 as it related to an expert witness, and specifically warned about these risks. In February 2026, the Connecticut Supreme Court ordered counsel for the appellant in Kosel Equity, LLC v. MacGregor to respond to questions about AI use after an errata sheet was filed to correct errors in the appellant’s brief.

> Counsel for the Appellant used Lexis for the legal research in the drafting of the brief. After the initial brief was drafted, Counsel used ChatGPT to assist in the organization and formatting of the content of the brief. This assisted with analyzing the brief to avoid duplication of arguments. After the initial drafting, I used AI to further assist with the organization, formatting and refinement of the brief, in particular, to assist with compliance with word count restrictions. It was not used as a substitute for legal research or an alternative to Counsel’s own work product.
>
> MEMORANDUM, February 19, 2026

And:

> AI was also used to assist in reviewing the content of the brief in particular to comply with the word count restrictions. The errors identified in the errata sheet were corrected by manually checking the brief’s quotations and formatting against the underlying sources. Unfortunately, Counsel did not notice that AI had intuitively made changes to the brief prior to filing.
>
> MEMORANDUM, February 19, 2026