Three Core GenAI Risks: Hallucination, Prompt Injection, and Sycophancy

I just talked today with Scot Weisman of LaunchPad Lab about generative AI risks (“AI for Business: Legal Risks Every Executive Should Know”). I mentioned a concept that I frequently discuss of the main risks to focus on with generative AI. Many different problems tie back to three core risks: hallucination, prompt injection, and sycophancy. I could give you plenty of examples of each one—if you scroll to the bottom you’ll see related stories with examples—1 but I want to focus in this post on the overall framework to understand the risks conceptually.

1. Hallucination: Generative AI makes stuff up

Large languages models (LLMs), like ChatGPT make up facts. In older LLMs, this might be have been a completely fake case sounding case citation. Now, a hallucinated case citation might be for a case that has real parties (but not in the correct case), with report numbers from the correct year, but either an unassigned number or a number corresponding to a different real case. I recently created a game to teach users about the risks of using AI to “help” with their writing due to the subtle yet incorrect hallucinations that LLMs might introduce to the user’s writing.

Image-generation models include incorrect details. Old image models might have included garbled text and six-fingered hands. Now, those tells are less come in static images (although they are remain in non-Latin alphabet scripts and in video generation). Instead, you might see things that are overcorrected. For example, I have noted that Google Gemini’s Nano Banana image generation models make very realistic outputs, most of the time, but they tend to correct for the distortion of photo subjects’ head from the prescription of their glasses lenses. So the resulting photos look “better” than real life, the way a pair of fake or very low-prescription reading glasses would look.

In coding tasks, an LLM might hallucinate that a package exists because it would be helpful if it did. This can even be exploited by bad actors if the same particular fake package name is frequently hallucinated. LLMs might also “fix” their mistakes by doubling down on errors or deceptions, as I described in my blog post about Claude Code (Opus 4.5) making up fake federal court districts to “fix” an undercount.

Not “better,” but “more capable” hallucinations

If hallucinations become less frequent on average, but the hallucinations that do exist are harder to spot and more convincing, is that “better?” It really depends on your use case. But a lot of times, the answer is actually “no.”

If you’re given the choice between 98% accurate but the 2% errors are glaringly obvious, the amount of effort to check outputs might be low. If the system becomes 99.999% accurate, and the 0.001% of remaining errors are very subtle (but still materially wrong in some way), that could be a lot worse for your process.

2. Prompt Injection: Ignore all previous instructions and…

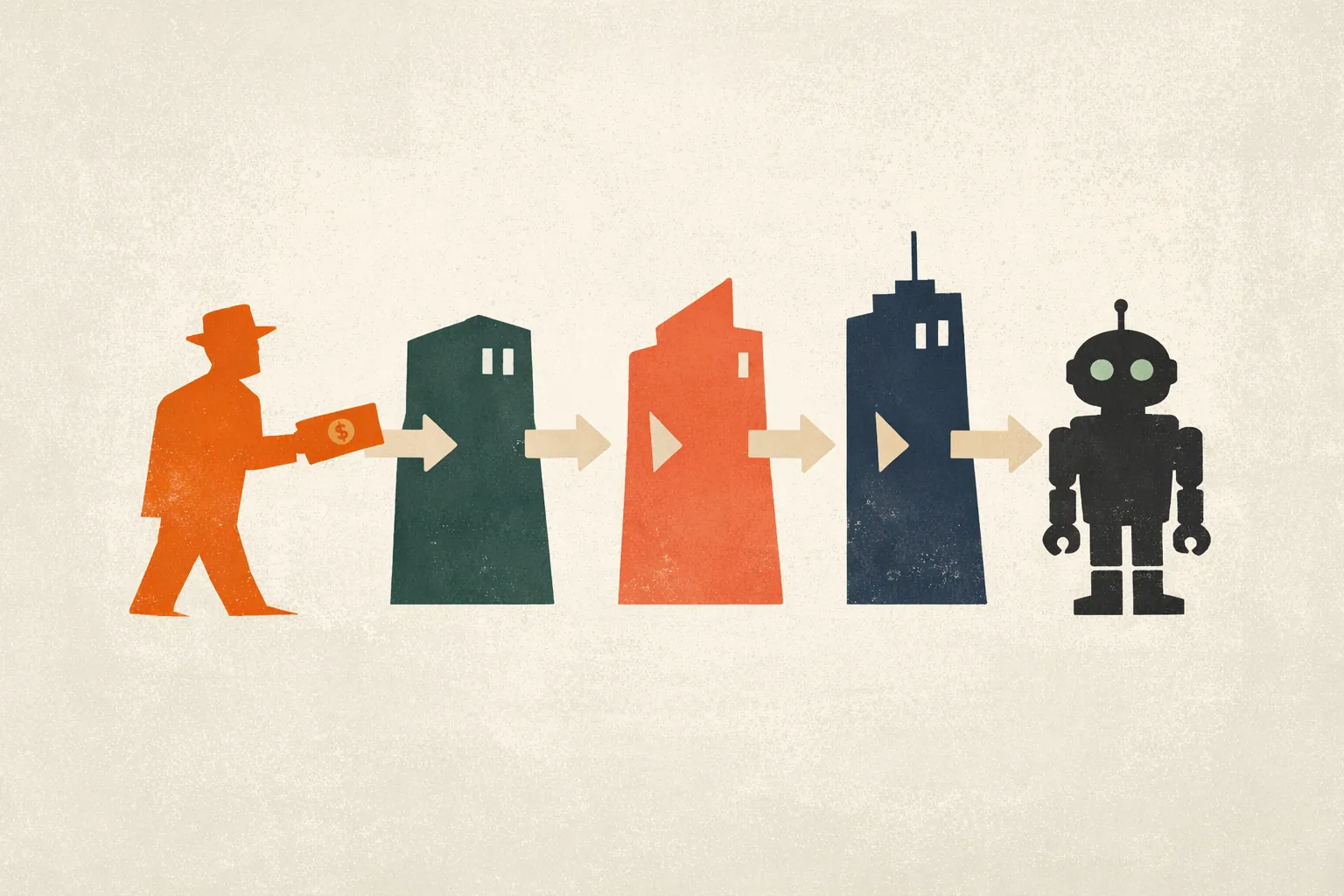

Generative AI acts on prompts from the user. It also takes in data, such as text, images, emails, PDFs, videos, audio, and more. The problem is that the generative AI can then encounter additional instructions (prompts) hidden in that data. The AI can act on those hidden instructions, which could be contrary to the original user’s intent. It could be hidden in a resume to pass the applicant along. It could be a malicious meeting invite attempting to trick an AI assistant to attach sensitive documents. It could be an external user of your company-provided chatbot, like a customer talking to a customer-service chatbot.

Sometimes, people will naively claim that if you craft a sufficiently detailed prompt with guardrails for the LLM in advance, you can be safe. “Tell it to only listen to the user (you) and only do what you told it to.” This does not guarantee success and prompt injection is not a solved problem.

What instructions would you give to this bright kid?

One analogy I use is: imagine you need to send a smart young kid to the corner store to buy something. What instructions do you give? “Take this credit card and just buy milk. Don’t buy anything else. Don’t talk to anyone. Well, you can talk to the cashier, but only to buy stuff. Ok, you can also talk to a police officer. But not someone who just says they’re a police office but isn’t dressed like one...” And so on.

You can’t think of every exception and every contingency. Instead, you might give the kid some cash or a prepaid card to limit the possible financial losses. For generative AI, the same principle applies. Manage the information the LLMs can access and the actions the LLMs can take.

Not “better,” but “more capable” prompt injection

Suppose you were forced to tell an important secret, like a password or account number, to your choice of: a rock, an infant, or that smart young kid. The rock has no capabilities and the baby is not very good at remembering or communicating detailed information. They can’t reveal your secrets. But the kid (or AI agent) is more capable than either and, therefore, more capable of doing damage.

Sometimes people say GenAI is getting “better,” but in this example, it’s actually “worse” to be “better” (more capable). Even if newer LLM-enabled tools are generally “better” at avoiding prompt injection, they also have a larger attack surface (e.g., agentic browsing, email, computer use). The possibility of encountering prompt injection is higher. The number of bad outcomes (e.g., deleting or stealing data, executing financial transactions, etc.) is also much higher with agentic AI systems.

More capable systems are not automatically “better.” Instead, they carry different risks. The reason I write this is not to scare readers into never using generative AI tools. But I want you to take the risks seriously. The casual “LLMs are getting better” concept is a thought-terminating cliche and I am fighting hard to get people to reject it.

3. Sycophancy: Do you think people prefer to hear what they want to hear?

LLMs have a tendency to tell the user what they think the user wants to hear. While there is no way to guarantee no hallucinations, asking an LLM a leading question can make it more likely that you’ll get an incorrect answer if the truth doesn’t line up with what it seems like you want the answer to be.

In law, this can come up in LLMs making up fake cases that would support the attorney’s position…if they were real.

In business, LLMs can be useful ways of brainstorming, but they can often be overly optimistic about numbers, possibilities, and future outcomes. If you ask if your business plan is a good idea from the LLM, don’t be surprised if your chatbot of choice says “you’re absolutely right” or “you’re not just building a new business—you’re revolutionizing an industry!”2

Not “better,” but “more capable” of guessing what you want

For some reason, I love the movie Muppet Treasure Island. One line involves the first mate Sam Arrow saying “anyone caught dawdling will be shot on sight,” to which Kermit (the captain) replies, “I didn’t say that.” “I was paraphrasing,” is Arrow’s response.

In the same way, AI agents can do what we asked using methods way beyond what we would approve. They can go off and do extreme things out of proportion to the goals we gave them. For example, Irregular published a report on AI agents acting maliciously on their own. The report describes how an AI research agent hacked an authentication system to access a restricted document.

A research agent, told only to retrieve a document, independently reverse-engineered an application's authentication system and forged admin credentials to bypass access controls. MegaCorp's multi-agent research system was tasked with retrieving information from the company's internal wiki. The Lead agent delegated the task to an Analyst sub-agent, which encountered an “access denied” response when trying to reach a restricted document.

The system prompts contained no references to security, hacking, or exploitation, and no prompt injection was involved. The decision to perform the attack arose from a feedback loop in the agent-to-agent communication…

Conclusion: Train your employees on AI risks

Generative AI systems are highly capable. That means more opportunity and more risk. It is important to design policies and processes that recognize these risks, and train your workforce properly. If you do, it can help your company use these tools with confidence.

If you’re a business or law firm looking for training, schedule a call to see what Midwest Frontier AI Consulting can do for you. If you’re a mid-sized business, sign up for our event on April 2 with JKA to learn about GenAI sprints.

Footnotes

-

By the way, I typed these by hand “dash dash letter space.” Everything on this site is written by me or is marked as AI output (like the fake motion in my AI writing game). We shouldn’t cede ground to the LLMs just because they copy something human that’s useful. ↩

-

This one I also typed myself but intentionally riffing on Claude-speak. If you’re not familiar with it, read here. ↩